Artificial intelligence is quickly evolving from a supporting technology into a decision-maker in its own right across industries. AI is becoming more and more autonomous in healthcare diagnostics, finance, and national security. This change has created a worldwide imperative to develop systems of governance that can manage risks while still facilitating innovation. The race to regulate AI is not merely a matter of law; it is a matter of defining the future relationship between humans and AI.

Why Is AI Governance Urgent Now?

As AI systems grow more powerful, their ability to make independent decisions becomes a matter of concern. Without proper governance, such systems could have unintended consequences that affect millions of people.

The Growing Need for Regulation.

AI systems are now firmly embedded in critical areas such as healthcare, banking, and transportation. They usually rely on complex algorithms whose decisions cannot be easily understood. This lack of transparency makes errors and biases hard to detect. In addition, as AI systems learn and evolve, their behavior may change over time and become harder to predict. This creates a strong need for governance structures that provide accountability and control.

Dangers of Uncontrolled AI.

Left unchecked, AI can be extremely dangerous. The risks include biased decision-making, invasion of privacy, and security threats. The development of autonomous weapons and AI-based cyberattacks is increasingly an international concern. Moreover, AI systems can amplify misinformation or influence public opinion, which may have considerable consequences for society. These risks must be kept low, which can be achieved through governance and ethical use.

The International Race to Develop AI Laws.

Countries around the world are competing to establish AI regulations that support their national strategies. It is a race not only for safety but also for technological supremacy.

Different Regulatory Models.

Countries are adopting distinct policies on AI. Europe has prioritized strict laws and user protection, aiming to build a secure digital space. The US takes an innovation-centered approach that gives companies more freedom to build AI technologies. China, meanwhile, is developing quickly while maintaining a high level of state control over the direction of that development. These contrasting models underscore how difficult it is to establish an integrated global framework.

Impact on Global Power.

AI governance is becoming an instrument of geopolitical power. Countries that set the benchmarks for AI regulation can shape global markets and technologies. This adds a competitive dimension in which countries strive to lead not only in innovation but also in rule-making. As a result, AI laws are increasingly shaping international relations and economic strategies.

Core Principles of AI Governance.

Despite regional differences, most AI governance models are built on a common set of principles.

Transparency and Explainability.

Transparency ensures that humans can understand AI systems. Explainable AI lets users and regulators see how decisions are made. This is essential in industries such as healthcare and finance, where life-changing decisions may be made. Without transparency, trust in AI systems is very low.

Responsibility and Accountability.

Accountability determines who is responsible when an AI system fails or causes harm. This may be developers, companies, or users, and the allocation of responsibility should be clearly defined. Clear accountability helps enforce rules and regulations and ensures that ethical considerations are upheld throughout the AI lifecycle.

Fairness and Bias.

AI systems should be designed to treat everyone fairly. Algorithms can be biased and therefore discriminate, particularly in hiring, lending, or law enforcement. Governance structures seek to identify and eliminate such biases so that all users are treated equally.
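One way an audit might put a number on this kind of bias is to compare approval rates between groups. The sketch below is illustrative only: the function names, the sample data, and the 0.8 review threshold (a commonly cited rule of thumb, not a requirement stated in this article) are all assumptions.

```python
from collections import defaultdict

def selection_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}

def disparate_impact_ratio(decisions):
    """Ratio of the lowest group approval rate to the highest (1.0 = parity)."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favored
    return min(rates.values()) / highest

# Hypothetical lending decisions: group A approved 8 of 10, group B 4 of 10.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)

REVIEW_THRESHOLD = 0.8  # assumed cut-off for flagging a model for review
ratio = disparate_impact_ratio(sample)
needs_review = ratio < REVIEW_THRESHOLD
print(f"ratio={ratio:.2f}, needs_review={needs_review}")
```

A low ratio does not prove discrimination on its own; it only signals that the system's decisions deserve closer human review.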

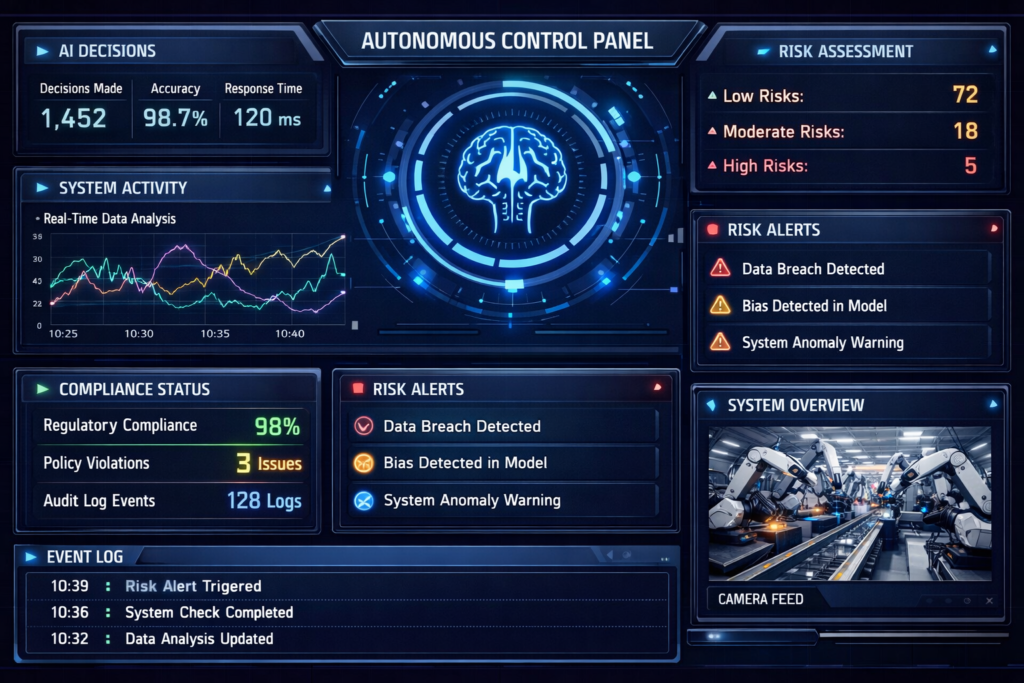

Why Regulating Autonomous Systems Is Hard.

Although the need for AI governance is well established, creating effective regulations is extremely hard.

Rapid Technological Development.

AI technologies develop much faster than the law. By the time a regulation is enacted, the technology may already have moved past it. This makes it difficult for policymakers to write laws that stay relevant in the long term.

Lack of International Coordination.

There is no consensus on how AI should be regulated. Countries have different priorities, which leads to fragmented regulation. This lack of coordination makes it hard to apply rules across borders, particularly to global tech companies.

Complexity of Ethics and Law.

Establishing ethical guidelines for AI is not simple. What counts as fair or acceptable may differ across cultures and societies. Moreover, assigning legal accountability for AI decisions can be complex, particularly when systems are autonomous.

The Role of Companies in AI Governance.

Private organizations play a significant role in AI governance because they are the ones building AI systems in the first place.

Internal AI Policies.

Many corporations are drawing up their own ethical codes for AI use. Such policies usually cover transparency, fairness, and data protection standards. Internal governance systems can help companies deploy AI responsibly before external regulations take effect.

Cooperation with Governments.

Collaboration between companies and governments is key to effective AI governance. Organizations provide technical expertise, while governments create the regulatory frameworks. This collaboration helps produce practical, enforceable policies that address real-world challenges.

Conclusion

AI governance is laying the foundation for a world in which machines play an important role in decision-making. Striking the right balance between innovation and responsibility is difficult. As nations strive to lead in AI development, collaboration and ethics are paramount. Well-designed governance structures will help ensure that AI serves humanity in a safe, fair, and transparent way.

Frequently Asked Questions (FAQs)

1. What is AI governance?

AI governance is the set of structures, rules, and laws that guide the development and responsible use of artificial intelligence.

2. Why should AI be regulated?

Regulation minimizes risk, misuse, and unfairness, and protects individuals from harmful AI decisions.

3. What are autonomous systems?

These are AI-based systems that can make decisions and carry out tasks without human involvement.

4. Who is responsible for AI decisions?

Depending on the design of the system and how it is used, responsibility can be assigned to developers, companies, or users.

5. What is the future of AI governance?

It will most likely involve international collaboration, harmonized rules, and robust oversight mechanisms.